Introduction

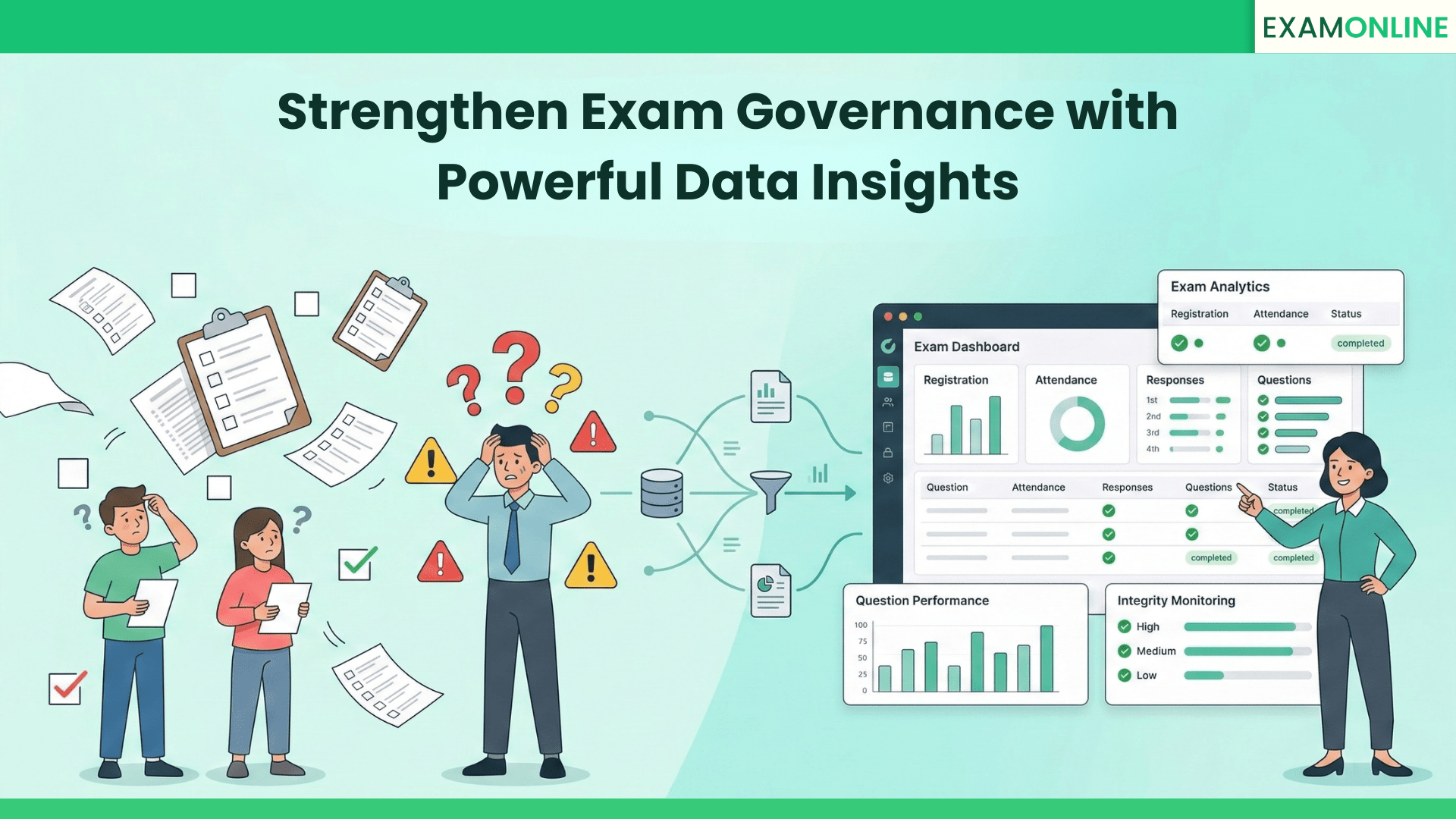

An online exam is not just a delivery mechanism for assessments. It is a data-generating system that supports the full assessment lifecycle – from registration to certification.

Every stage of an online exam – registration, attendance, response activity, question performance, and integrity monitoring – produces structured information. Institutions that analyse this data move from reactive exam administration to proactive governance.

Without structured insights, an online exam is an event. With structured insights, it becomes a controlled system.

This article explains why online exam insights matter, how they are structured across the exam lifecycle, and how institutions use them to improve academic quality, operational efficiency, and risk management.

Why Online Exam Insights Matter in Institutional Governance

Most institutions focus on conducting online exams securely. Fewer evaluate how exam data can improve processes and governance.

Online exam reports serve three strategic purposes:

- Academic improvement

- Operational control

- Governance defensibility

Academic improvement depends on question-level analytics and performance trends. Operational control depends on attendance, registration, and system readiness visibility. Governance defensibility depends on traceable logs and documented review workflows.

Institutions operating a structured online exam framework use post-exam analytics not merely for reporting, but for policy refinement.

Online exam insights convert activity into accountability.

Each exam generates reports across three stages:

- Pre-exam reports

- In-exam reports

- Post-exam reports

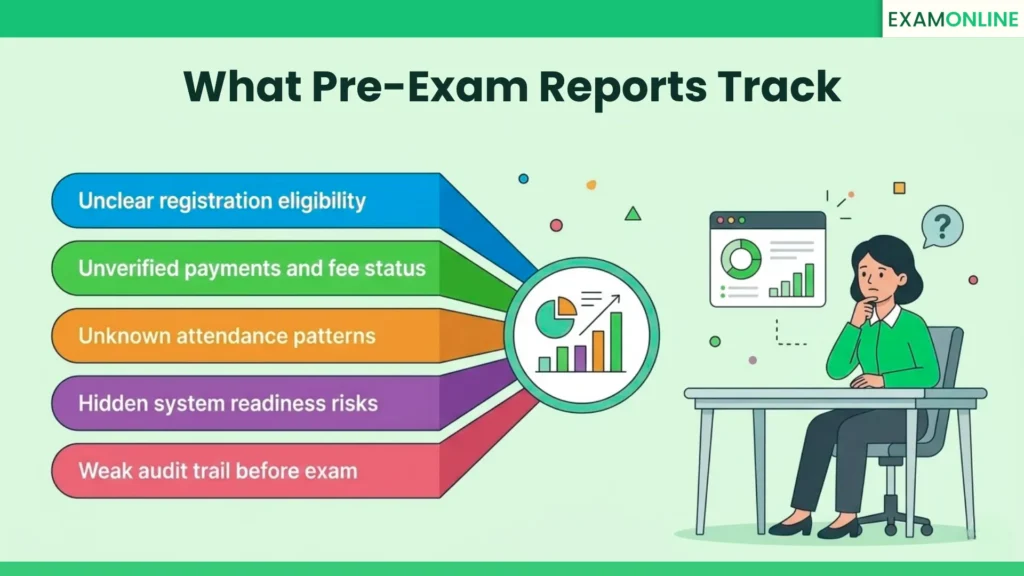

Pre-Exam Reports – Establishing Control Before Execution

Before an online exam begins, institutions must confirm readiness, eligibility, and technical compliance. Pre-exam reports reduce uncertainty and prevent operational breakdown.

Registration and Payment Status Reports

When online exams use structured registration forms with payment workflows, the system captures eligibility and financial validation data.

Registration reports typically include:

- Application completion status

- Payment confirmation or failure

- Time-stamped submission records

- Candidate segmentation based on registration and payment status

These reports allow institutions to reconcile financial records, detect incomplete registrations, and prevent unauthorized participation.
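
As a rough illustration of the segmentation described above, the sketch below groups candidates by form and payment status. The record layout, field names, and the eligibility rule are assumptions made for this example, not an actual platform schema.

```python
from collections import defaultdict

# Hypothetical registration records; field names are illustrative only.
registrations = [
    {"candidate_id": "C001", "form_complete": True, "payment_status": "paid"},
    {"candidate_id": "C002", "form_complete": True, "payment_status": "failed"},
    {"candidate_id": "C003", "form_complete": False, "payment_status": "pending"},
    {"candidate_id": "C004", "form_complete": True, "payment_status": "paid"},
]


def segment_registrations(records):
    """Group candidate IDs by (form status, payment status)."""
    segments = defaultdict(list)
    for rec in records:
        form = "complete" if rec["form_complete"] else "incomplete"
        segments[(form, rec["payment_status"])].append(rec["candidate_id"])
    return dict(segments)


def eligible_candidates(records):
    """Assumed rule: eligible only with a complete form and a confirmed payment."""
    return [
        rec["candidate_id"]
        for rec in records
        if rec["form_complete"] and rec["payment_status"] == "paid"
    ]


segments = segment_registrations(registrations)
eligible = eligible_candidates(registrations)
```

Candidates outside the eligible list are the ones to follow up with before the exam window opens.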

In large-scale assessments, registration analytics reveal drop-off patterns and peak submission windows. This improves forecasting and reduces last-minute congestion.

Without structured registration reporting, exam governance weakens at its foundation.

Attendance Reports in Online Exams

Attendance in an online exam environment is more than login confirmation. It is time-bound participation validation.

Attendance reports capture:

- Login timestamps

- Exam start confirmation

- Completion status

- Absentee identification

This data becomes critical during result disputes or audit reviews.
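
One possible way to derive attendance status from the fields listed above is sketched here. The log structure and the status labels are hypothetical and would differ by platform.

```python
from datetime import datetime

# Hypothetical data: registered candidates and their attendance timestamps.
registered = ["C001", "C002", "C003"]
attendance_log = {
    "C001": {
        "login": datetime(2024, 1, 10, 9, 55),
        "started": datetime(2024, 1, 10, 10, 0),
        "submitted": datetime(2024, 1, 10, 11, 0),
    },
    "C002": {
        "login": datetime(2024, 1, 10, 9, 58),
        "started": datetime(2024, 1, 10, 10, 2),
        "submitted": None,  # started but never submitted
    },
    # C003 has no entry at all: never logged in.
}


def attendance_status(candidate_id, log):
    """Classify a candidate from login, start, and submission timestamps."""
    entry = log.get(candidate_id)
    if entry is None or entry.get("login") is None:
        return "absent"
    if entry.get("started") is None:
        return "logged_in_not_started"
    if entry.get("submitted") is None:
        return "incomplete"
    return "completed"


report = {cid: attendance_status(cid, attendance_log) for cid in registered}
absentees = [cid for cid, status in report.items() if status == "absent"]
```

Keeping the raw timestamps alongside the derived status preserves the evidence needed in a dispute or audit.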

Across multiple exam cycles, attendance analytics reveal recurring absentee groups, slot inefficiencies, and unusual submission behaviour.

Attendance data protects institutional credibility.

System Check and Readiness Reports

Many online exam platforms initiate system validation once an exam is scheduled. The resulting readiness reports track device compatibility, browser validation, webcam functionality, and network stability.

System check analytics:

- Prevent technical disruptions during the exam

- Provide predictive insight into candidate readiness

Bulk readiness reports help institutions identify recurring technical issues across cohorts. Over time, this reduces support escalation and improves communication strategies.

When aligned with broader exam management system features, readiness becomes embedded within governance workflows.

Pre-exam reporting establishes operational stability before monitoring begins.

In-Exam Reports – Monitoring Behaviour and Performance Patterns

During an online exam, structured logs capture candidate interaction behaviour. These logs form the foundation for performance transparency and risk assessment.

Exam Activity and Response Logs

A comprehensive online exam report includes the answer sheet, activity log, and response history.

Activity logs record the following with timestamps:

- System check log

- Navigation order

- Time spent per question

- Answer changes

Response logs capture chronological modifications to submitted answers.

These insights allow institutions to detect abnormal patterns such as excessive answer switching or disproportionate time allocation.
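
To make the idea concrete, here is a minimal sketch that derives time spent per question and answer changes from a timestamped event stream. The event format (`view`, `answer`, `submit`) is an assumption for illustration; real activity logs will use their own event names and fields.

```python
from collections import Counter, defaultdict

# Hypothetical events: (seconds since exam start, event type, question ID, value).
events = [
    (0, "view", "Q1", None),
    (40, "answer", "Q1", "B"),
    (45, "view", "Q2", None),
    (100, "answer", "Q2", "A"),
    (110, "view", "Q1", None),
    (125, "answer", "Q1", "C"),
    (130, "submit", None, None),
]


def summarise_activity(events):
    """Return (seconds spent per question, answer changes per question)."""
    time_spent = defaultdict(int)
    answer_counts = Counter()
    current_q, opened_at = None, None
    for ts, kind, qid, _value in sorted(events, key=lambda e: e[0]):
        if kind in ("view", "submit"):
            # Close the interval for the question that was on screen.
            if current_q is not None:
                time_spent[current_q] += ts - opened_at
            current_q, opened_at = (qid, ts) if kind == "view" else (None, None)
        elif kind == "answer":
            answer_counts[qid] += 1
    # The first recorded answer is not a change; every later one is.
    changes = {qid: count - 1 for qid, count in answer_counts.items()}
    return dict(time_spent), changes


time_spent, answer_changes = summarise_activity(events)
```

What counts as "excessive" switching or "disproportionate" time is a policy decision, so any thresholds applied to these numbers should come from the institution's review protocol.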

When interpreted within a structured review process, activity logs support fair evaluation and defensible result decisions.

Institutions applying strategic exam management integrate these behavioural insights into formal review protocols.

Score Reports and Section-Level Analytics

Score reports in modern online exam systems extend beyond total marks.

They provide:

- Section-wise performance breakdown

- Topic-level accuracy

- Difficulty-based performance variation

This data reveals curriculum alignment gaps and supports recalibration of question design.

Section-level analytics also support fairness audits by confirming scoring consistency across batches.

Online exam score insights strengthen academic credibility when reviewed systematically.

Post-Exam Reports – Improving Academic Quality and Standardization

The most powerful online exam insights emerge after submissions are complete.

Post-exam analytics help evaluate exam design, question calibration, and scoring consistency for future improvements.

Question Performance and Difficulty Calibration

Question bank analysis reports reveal how individual questions perform across the candidate pool.

Institutions analyse:

- Attempt rates per question

- Correct versus incorrect response ratios

- Average time spent

- Difficulty consistency

These metrics help identify ambiguous questions, ineffective distractors, and inconsistent calibration of question difficulty.
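
The per-question metrics listed above can be computed along these lines. This is a sketch under assumed field names and sample data; it is not a full psychometric item analysis.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical response rows: one row per candidate per question.
responses = [
    {"question_id": "Q1", "attempted": True, "correct": True, "seconds": 30},
    {"question_id": "Q1", "attempted": True, "correct": True, "seconds": 40},
    {"question_id": "Q1", "attempted": True, "correct": False, "seconds": 50},
    {"question_id": "Q1", "attempted": True, "correct": True, "seconds": 40},
    {"question_id": "Q2", "attempted": True, "correct": True, "seconds": 90},
    {"question_id": "Q2", "attempted": True, "correct": False, "seconds": 110},
    {"question_id": "Q2", "attempted": False, "correct": False, "seconds": 0},
    {"question_id": "Q2", "attempted": False, "correct": False, "seconds": 0},
]


def question_metrics(rows):
    """Compute attempt rate, correct rate, and average time for each question."""
    by_question = defaultdict(list)
    for row in rows:
        by_question[row["question_id"]].append(row)

    metrics = {}
    for qid, q_rows in by_question.items():
        attempts = [r for r in q_rows if r["attempted"]]
        metrics[qid] = {
            "attempt_rate": len(attempts) / len(q_rows),
            # Rates are undefined when nobody attempted the question.
            "correct_rate": (
                sum(r["correct"] for r in attempts) / len(attempts)
                if attempts else None
            ),
            "avg_seconds": (
                mean([r["seconds"] for r in attempts]) if attempts else None
            ),
        }
    return metrics


metrics = question_metrics(responses)
```

A question with a low attempt rate and a long average time, like the hypothetical `Q2` here, would be a candidate for wording or difficulty review.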

Topic-level aggregation reveals systemic knowledge gaps. Over multiple exam cycles, this enables evidence-based syllabus adjustments.

Question analytics ensure that online exams remain fair, standardised, and defensible.

Question Bank Activity Logs and Traceability

Governance does not stop at performance. It extends to content control.

Question bank activity reports track:

- Authorship

- Review history

- Modification logs

- Access records

Traceability ensures:

- Clear separation of duties

- Accountability in question approval

- Prevention of unauthorized edits

- Audit readiness

Institutions undergoing accreditation or compliance review depend on documented traceability.

Without structured logs, question integrity is hard to defend under scrutiny, and leak investigations become difficult.

Proctoring and Integrity Reports in Online Exams

Monitoring during an online exam generates behavioural and compliance data. The real value lies in structured interpretation.

Integrity reports must feed into institutional risk frameworks.

Incident Analysis and Violation Categorization

A comprehensive online exam proctoring report typically records:

- Type of violation

- Timestamp of occurrence

- Supporting screenshot evidence

- Duration of violation

- Overall fairness score

These reports help institutions differentiate minor behavioural deviations from significant violations.
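
A simple rule-based classifier shows how this distinction might be encoded. The violation types, the duration limit, and the two severity labels are all assumptions for this sketch; actual thresholds belong to institutional policy and should be confirmed by human review.

```python
from collections import Counter

# Assumed policy values, for illustration only.
HIGH_RISK_TYPES = {"multiple_faces", "screen_share_detected"}
MINOR_DURATION_LIMIT_SECONDS = 10

# Hypothetical incidents taken from a proctoring report.
incidents = [
    {"candidate_id": "C001", "type": "looked_away", "duration_seconds": 4},
    {"candidate_id": "C001", "type": "looked_away", "duration_seconds": 25},
    {"candidate_id": "C002", "type": "multiple_faces", "duration_seconds": 3},
]


def classify_incident(incident):
    """Label an incident 'minor' or 'significant' under the assumed policy."""
    if incident["type"] in HIGH_RISK_TYPES:
        return "significant"
    if incident["duration_seconds"] > MINOR_DURATION_LIMIT_SECONDS:
        return "significant"
    return "minor"


labels = [classify_incident(incident) for incident in incidents]
severity_counts = Counter(labels)
```

Because the rules are explicit constants, they can be tightened or relaxed between exam cycles and the change itself can be documented.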

The objective is not excessive enforcement. The objective is proportionate response.

When analysed across exam cycles, violation categorisation helps refine thresholds and standardise review protocols.

Integrity reporting strengthens online exam defensibility when classification models and review workflows are consistent.

Using Fairness Scores for Structured Risk Management

Fairness scoring systems allow institutions to evaluate exam-level risk exposure.

When aggregated across candidates and sessions, integrity analytics reveal:

- Repeat behavioural patterns

- Exams or sections with high violation density

- Operational vulnerabilities

This shifts governance from individual discipline to systemic improvement.

Online exam integrity insights create value only when reviewed at scale.

Operational and Financial Reports in Online Exam Management

An online exam is also an operational and financial workflow. Reporting must extend beyond academic performance.

Slot Management and Capacity Utilization Reports

When online exams allow slot booking, institutions analyse:

- Slot capacity utilization

- Peak booking windows

- Underutilized time slots

- Rescheduling patterns

Slot analytics improve demand forecasting and resource planning.
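
Slot utilisation is booked seats divided by capacity. The sketch below computes it for a few hypothetical slots and flags those under an assumed 50% threshold; the data and the threshold are illustrative.

```python
# Assumed cut-off below which a slot counts as underutilised.
UNDERUTILISED_THRESHOLD = 0.5

# Hypothetical slot booking data.
slots = [
    {"slot": "09:00", "capacity": 100, "booked": 95},
    {"slot": "13:00", "capacity": 100, "booked": 30},
    {"slot": "17:00", "capacity": 50, "booked": 40},
]


def utilisation(slot):
    """Return booked / capacity, or 0.0 when a slot has no capacity."""
    if slot["capacity"] <= 0:
        return 0.0
    return slot["booked"] / slot["capacity"]


utilisation_by_slot = {s["slot"]: utilisation(s) for s in slots}
underutilised = [
    name for name, rate in utilisation_by_slot.items()
    if rate < UNDERUTILISED_THRESHOLD
]
```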

Operational insights strengthen scalability.

Payment and Transaction Reporting

When online exams include integrated payment gateways, financial traceability becomes critical.

Payment reports track:

- Successful transactions

- Failed attempts

- Exam-level payments

- Use of discount coupons and vouchers

These reports support compliance and revenue reconciliation.

Financial analytics reduce disputes and strengthen administrative transparency.

Operational reporting aligns assessment governance with institutional accountability.

Bulk and Cross-Exam Analytics

Individual exam reports provide tactical insights. Bulk reporting creates strategic visibility.

Institutions conducting recurring or high-volume online exams must analyse data across multiple exam cycles.

Cross-Exam Performance Trends

Bulk analytics allow institutions to compare:

- Pass rates across sections or batches

- Topic-level performance trends

- Difficulty consistency over time

- Instructional effectiveness

Trend analysis strengthens standardisation and long-term academic planning.

Institutions embedding analytics into a structured exam management governance model reduce dependence on isolated exam outcomes.

From Online Exam Data to Governance Framework

Collecting online exam reports is not governance.

Data becomes valuable only when institutions define structured review processes with ownership, timelines, and escalation standards.

Establishing Review Ownership and Timelines

Institutions must clearly define:

- Who reviews attendance and registration discrepancies

- Who analyses question performance trends

- Who validates integrity incidents

- Who authorizes escalations

High-stakes online exams require post-exam analytics to be reviewed within defined service-level agreements.

Governance maturity is reflected in accountability clarity.

Standardising Escalation and Evidence Retention

Online exam insights must feed structured escalation models.

For example:

- Minor behavioural flags may require documentation

- Repeated violations may require committee review

- Financial discrepancies may require reconciliation

Each escalation category should be supported by documented evidence from activity logs and proctoring reports.
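
The example categories above could be expressed as a small routing function. The case fields, action names, and the repeat threshold are hypothetical; the point of the sketch is that each route is explicit and that missing evidence is surfaced rather than ignored.

```python
# Assumed threshold: this many prior violations counts as "repeated".
REPEAT_THRESHOLD = 2


def route_case(case):
    """Map a flagged case to an action and note whether evidence is attached."""
    kind = case["kind"]
    if kind == "financial_discrepancy":
        action = "reconciliation"
    elif kind == "behavioural_flag":
        if case.get("prior_violations", 0) >= REPEAT_THRESHOLD:
            action = "committee_review"
        else:
            action = "document_only"
    else:
        # Unknown categories go to a person rather than being dropped.
        action = "manual_triage"
    return {
        "case_id": case["case_id"],
        "action": action,
        "evidence_attached": bool(case.get("evidence_refs")),
    }


# Hypothetical cases; evidence_refs would point at log or report entries.
cases = [
    {"case_id": 1, "kind": "behavioural_flag", "prior_violations": 0,
     "evidence_refs": ["activity-log-entry"]},
    {"case_id": 2, "kind": "behavioural_flag", "prior_violations": 3,
     "evidence_refs": ["proctoring-report-entry"]},
    {"case_id": 3, "kind": "financial_discrepancy", "evidence_refs": []},
]
decisions = [route_case(case) for case in cases]
```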

Evidence retention policies must be standardised. Institutions conducting certification, recruitment, or regulatory assessments cannot rely on temporary storage.

When structured review becomes institutional practice, online exams move from event-based execution to controlled systems.

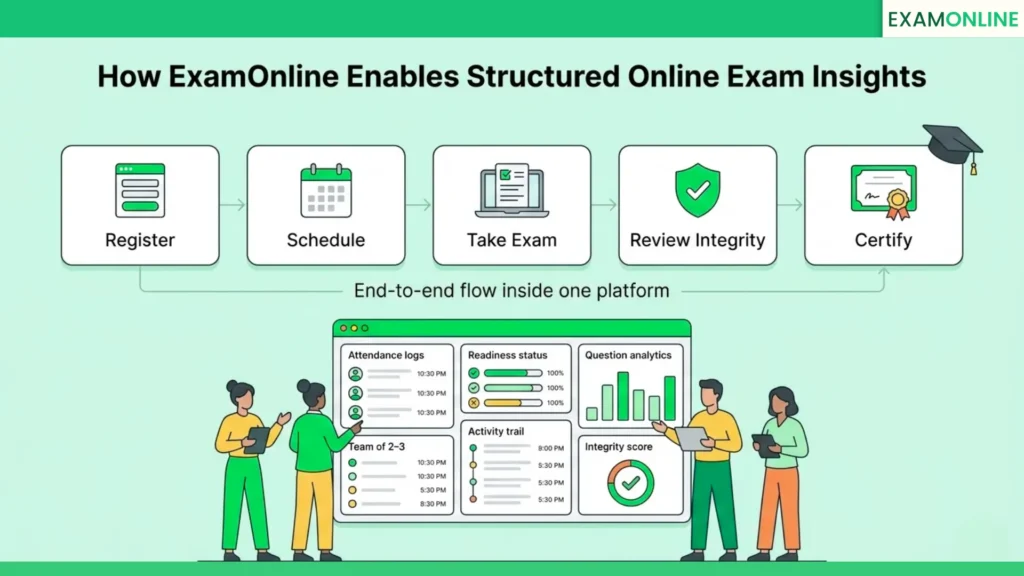

How ExamOnline Enables Structured Online Exam Insights

ExamOnline supports end-to-end online exam workflows across 25+ countries and 250+ organisations. Within a unified framework, the platform manages registration, slot booking, payment integration, identity verification, exam delivery, and certificate generation with automatic distribution.

ExamOnline provides structured dashboards with access to attendance logs, readiness reports, answer sheets, activity trails, question analytics, integrity reports, and bulk cross-exam performance data. Each record is time-stamped and traceable, supporting audit readiness and dispute defensibility.

Institutions can review operational, academic, and compliance insights without exporting fragmented data across systems. This centralised structure strengthens governance across the entire exam lifecycle.

Explore the complete online exam platform to understand how structured reporting integrates with delivery and certification workflows.

Online exams do not become defensible by default – they become defensible when institutions actively interpret and govern the data they generate.

Frequently Asked Questions (FAQ)

What are online exam insights?

Online exam insights refer to structured analytics generated across registration, attendance, activity logs, question performance, integrity monitoring, and financial workflows.

Why are online exam reports important for accreditation?

Accreditation bodies require traceable documentation. Online exam reports provide evidence of attendance validation, scoring transparency, and structured incident review.

How do question bank analytics improve exam quality?

Question performance data reveals calibration gaps and topic-level knowledge weaknesses, enabling evidence-based improvements.

Can online exam analytics reduce disputes?

Yes. Structured activity logs and transaction records provide defensible documentation during result or payment disputes.

How often should institutions review online exam reports?

Institutions running high-stakes exams should review reports after every exam cycle and conduct bulk trend analysis periodically.