Introduction

Olympiad exams are not standard assessments delivered at scale. They are competitive programs where candidates are ranked against each other across locations, and outcomes directly influence progression, recognition, or selection.

This changes the responsibility of the organiser.

When results are published, they are not just outcomes – they are claims. Claims that the process was fair, that every candidate was evaluated under the same conditions, and that no unfair advantage influenced rankings.

Online olympiad exam management is the structured approach to delivering these programs while ensuring those claims can be defended. This requires more than delivery infrastructure. It requires control, traceability, and governance at every stage of the exam lifecycle.

What Makes Online Olympiad Exam Management Uniquely Demanding

Scale is Not Volume – It is Synchronized Execution

In most exams, scale is treated as a volume problem. In olympiads, it is a simultaneity problem.

The cohort is not only large. Candidates are distributed across regions and often sit the exam within the same window. The platform must therefore handle thousands of concurrent sessions without degradation in performance or monitoring quality.

A platform that works well for asynchronous assessments may fail under synchronized load. Delays, session drops, or monitoring failures during peak concurrency directly affect fairness.

This is why olympiad delivery requires infrastructure built for parallel execution, sized for peak concurrent sessions rather than for total volume spread over time.

Fairness is Relative, Not Absolute

In a standard exam, a candidate’s score stands independently. In an olympiad, it does not.

Every candidate is evaluated relative to others. This means even a small integrity failure – one candidate receiving assistance, accessing external resources, or bypassing restrictions – affects the entire ranking system.

The implication is critical.

Integrity is not just about preventing cheating. It is about preserving relative fairness across the cohort. If one session is compromised, the impact is distributed across all participants.

This is what makes olympiad integrity a system-level requirement, not a candidate-level concern.
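A small sketch makes the cohort-wide effect concrete. The candidate labels, scores, and the `competition_rank` helper are illustrative assumptions, not data from any real olympiad.

```python
def competition_rank(scores):
    """Rank = 1 + number of candidates with a strictly higher score (ties share a rank)."""
    values = list(scores.values())
    return {
        candidate: 1 + sum(1 for other in values if other > score)
        for candidate, score in scores.items()
    }

# Hypothetical cohort; "X" stands for one session later found to be compromised.
scores = {"A": 92, "B": 88, "C": 88, "D": 75, "X": 95}

published = competition_rank(scores)
corrected = competition_rank({c: s for c, s in scores.items() if c != "X"})

# Honest candidates whose rank changes once the compromised session is removed.
affected = sorted(c for c in corrected if corrected[c] != published[c])
print(affected)
```

In this toy cohort a single compromised session at the top shifts the rank of every other candidate, which is the sense in which the impact is distributed.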

Post-Exam Operations Are Governance Decisions

Once the exam ends, the nature of work changes.

What appears operational – result release, complaint handling, certificate issuance – is actually governance.

Releasing results means asserting that the exam process was fair. Handling complaints requires evidence, not assumptions. Issuing certificates means formally validating the outcome.

At scale, manual reconciliation across systems becomes unreliable. If identity data, session logs, and incident records are not connected, the organiser cannot reconstruct what happened during the exam.

This is where most failures occur – not during delivery, but during post-exam defensibility.

The Integrity Gap in Large-Scale Competitive Exams

The biggest risk in olympiad exams is not visible during execution. It appears after the results are released.

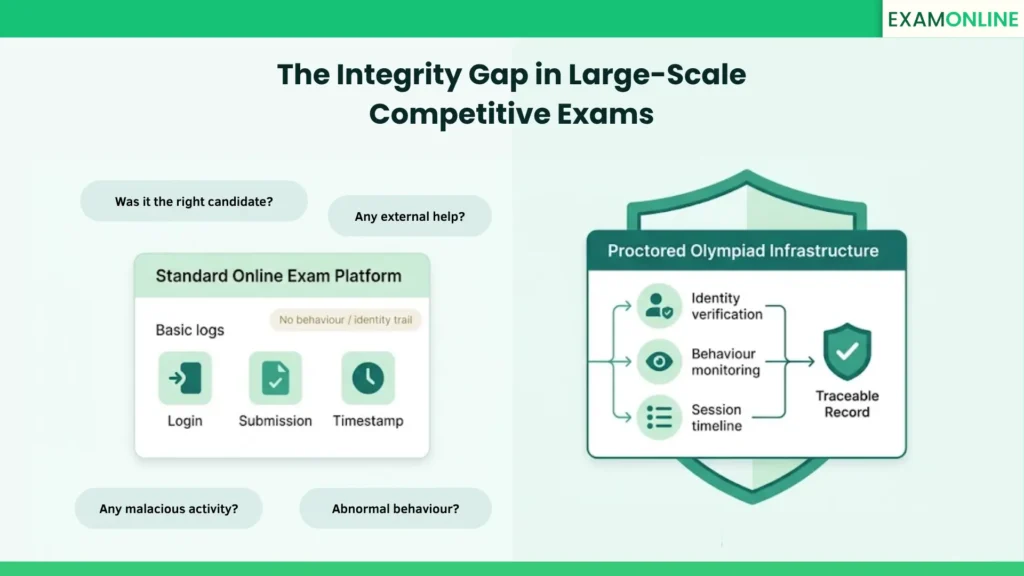

A standard online exam platform records timestamped events such as logins and submissions. But it does not create a behavioural or identity-linked audit trail.

This creates a structural gap.

If a result is challenged, the organiser cannot answer key questions:

- Was the registered candidate present throughout the exam?

- Was there external assistance?

- Did any abnormal behaviour occur during the session?

Without this evidence, decisions become subjective.

This is where proctoring infrastructure becomes essential. It does not just monitor candidates. It connects identity, behaviour, and session activity into a single traceable record.

At small scale, this gap may go unnoticed. At large scale, it compounds.

An integrity failure affecting a few sessions becomes a program-level credibility issue when multiplied across thousands of candidates.

Olympiad Proctoring Models: Choosing the Right Approach

AI Proctoring Enables Scale Without Losing Visibility

AI-based proctoring is designed for environments where candidate volume is high and manual monitoring is not feasible.

It continuously analyses behavioural signals such as gaze direction, face presence, audio activity, and object detection. Instead of intervening immediately, it flags anomalies and creates a structured incident log.

This log becomes critical after the exam.

It allows organisers to:

- Review only flagged sessions instead of all sessions

- Apply consistent evaluation criteria

- Maintain evidence for every decision made

The value of AI proctoring is not just real-time detection. It is post-exam traceability at scale.
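As a rough sketch of what a structured incident log could look like, the example below filters a log down to the sessions that need human review. The `Incident` fields, the signal labels, and the 0.8 threshold are assumptions made for illustration. They do not describe any specific proctoring product.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Incident:
    session_id: str
    signal: str        # hypothetical labels, e.g. "face_absent", "second_voice"
    timestamp_s: int   # seconds from session start
    confidence: float  # detector confidence in [0, 1]

REVIEW_THRESHOLD = 0.8  # assumed cut-off, applied identically to every session

def sessions_needing_review(incidents, threshold=REVIEW_THRESHOLD):
    """Group incidents at or above the threshold by session, keeping the evidence."""
    queue = {}
    for incident in incidents:
        if incident.confidence >= threshold:
            queue.setdefault(incident.session_id, []).append(incident)
    return queue

log = [
    Incident("s-001", "face_absent", 312, 0.93),
    Incident("s-002", "gaze_away", 45, 0.41),
    Incident("s-001", "second_voice", 350, 0.85),
    Incident("s-003", "object_detected", 900, 0.80),
]
review_queue = sessions_needing_review(log)
print(sorted(review_queue))
```

Because one threshold is applied to every session, reviewers see only flagged sessions, and each queued session carries the incidents that put it there.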

Live Proctoring Adds Intervention and Accountability

In high-stakes rounds, detection alone is not sufficient. Intervention becomes important.

Live proctoring introduces human oversight into the process. A trained proctor can observe behaviour, communicate with the candidate, and take immediate action if required.

This reduces ambiguity in decision-making.

It also strengthens defensibility. A flagged session supported by human observation carries more weight than automated detection alone.

For final rounds, where outcomes are critical and candidate volume is lower, this added layer becomes necessary.

Remote Proctoring Redefines Infrastructure Requirements

Olympiad exams no longer depend on physical centres, but this does not reduce infrastructure complexity. It shifts it.

Instead of controlling a physical environment, the platform must control a distributed environment.

This includes:

- Verifying candidate identity remotely

- Monitoring behaviour across different devices and locations

- Ensuring consistency despite varied network conditions

Remote proctoring is the layer that standardises this variability and enforces the rules uniformly.

Online Olympiad Exam Management Process: End-to-End

The olympiad lifecycle is a sequence of interdependent steps. Each step builds the foundation for the next.

Scaling an olympiad exam requires that this sequence be system-driven, not manually coordinated.

Registration and Identity Setup Defines the Baseline

Identity verification must happen at registration, not for the first time at the exam. If identity is only checked during the session, there is no prior reference point. Early verification creates a baseline record that the system can continuously validate against.

This reduces impersonation risk and ensures consistency across the lifecycle.

Slot Booking Controls Load and Candidate Experience

Slot booking is not just a scheduling feature. It is a load management mechanism. Without controlled slot allocation, candidate spikes can overload systems, affecting both performance and monitoring accuracy.

A structured booking system distributes sessions evenly and ensures stable execution.
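One way to picture slot booking as load management is a capacity-capped allocator. This is a minimal sketch under assumed inputs: the slot labels, capacities, and the `allocate_slots` function are hypothetical, and a real system would also need persistence and concurrency control.

```python
def allocate_slots(requests, slot_capacity):
    """Assign each candidate a slot without exceeding any slot's concurrent-session cap.

    requests: list of (candidate_id, preferred_slot), in booking order
    slot_capacity: dict of slot -> maximum concurrent sessions (assumed known)
    """
    load = {slot: 0 for slot in slot_capacity}
    assignment, waitlist = {}, []
    for candidate_id, preferred in requests:
        if preferred in load and load[preferred] < slot_capacity[preferred]:
            chosen = preferred
        else:
            open_slots = [s for s in load if load[s] < slot_capacity[s]]
            if not open_slots:
                waitlist.append(candidate_id)  # no capacity anywhere
                continue
            # Fall back to the slot with the most remaining headroom.
            chosen = max(open_slots, key=lambda s: slot_capacity[s] - load[s])
        load[chosen] += 1
        assignment[candidate_id] = chosen
    return assignment, waitlist

capacity = {"09:00": 2, "11:00": 2}
requests = [(f"c{i}", "09:00") for i in range(1, 6)]  # everyone wants 09:00
assignment, waitlist = allocate_slots(requests, capacity)
print(assignment, waitlist)
```

Here the spike at 09:00 is spread to 11:00 once the first slot is full, and the candidate who cannot be placed is waitlisted rather than overloading a session window.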

Pre-Exam Validation Prevents Downstream Failures

Most technical complaints have causes that exist before the exam begins. Device incompatibility, missing permissions, or unsupported browsers surface as disruptions during the session. A pre-exam validation layer catches these issues early, while there is still time to fix them.

This reduces both candidate frustration and operational overhead during the exam window.
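A pre-exam check can be as simple as validating a device report against a published policy. In the sketch below the report fields, the browser version floors, and the bandwidth minimum are all assumed values chosen for illustration.

```python
# Assumed policy values; a real programme would publish its own.
MIN_BROWSER_VERSION = {"chrome": 110, "edge": 110, "firefox": 115}
REQUIRED_PERMISSIONS = ("camera", "microphone")
MIN_UPLOAD_KBPS = 512

def validate_device(report):
    """Return a list of problems; an empty list means the device passed."""
    problems = []
    browser = report.get("browser", "").lower()
    minimum = MIN_BROWSER_VERSION.get(browser)
    if minimum is None:
        problems.append(f"unsupported browser: {browser or 'unknown'}")
    elif report.get("browser_version", 0) < minimum:
        problems.append(f"{browser} must be version {minimum} or later")
    for permission in REQUIRED_PERMISSIONS:
        if not report.get("permissions", {}).get(permission, False):
            problems.append(f"{permission} permission not granted")
    if report.get("upload_kbps", 0) < MIN_UPLOAD_KBPS:
        problems.append("upload bandwidth below minimum")
    return problems

ok_report = {
    "browser": "Chrome", "browser_version": 120,
    "permissions": {"camera": True, "microphone": True},
    "upload_kbps": 2000,
}
bad_report = {"browser": "safari", "permissions": {"camera": True}, "upload_kbps": 100}
print(validate_device(ok_report))
print(validate_device(bad_report))
```

Running a check like this days before the exam turns each problem into a fixable task for the candidate instead of a complaint during the exam window.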

Session Monitoring Creates the Core Evidence Layer

During the exam, every interaction must be captured and linked. Monitoring is not just about flagging suspicious behaviour. It is about creating a continuous record of the session that can be analysed later.

Each flag, timestamp, and behavioural signal contributes to a defensible audit trail.

Incident Review Determines Result Validity

Results should not be released immediately after submission. Flagged sessions must be reviewed in context. Patterns must be analysed. Decisions must be documented.

This step converts raw monitoring data into governance outcomes. Without it, monitoring has no value.
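The review step can be expressed as a release gate: a flagged session's result is published only once a documented decision exists. The sketch below assumes a simple decision record with `outcome`, `reviewer`, and `note` fields. These names are hypothetical.

```python
def releasable_results(scores, flagged_sessions, review_decisions):
    """Split results into publishable, held, and excluded.

    scores: session_id -> score
    flagged_sessions: set of session IDs with at least one flag
    review_decisions: session_id -> {"outcome": "cleared" | "disqualified",
                                     "reviewer": str, "note": str}
    """
    release, hold, excluded = {}, [], []
    for session_id, score in scores.items():
        if session_id not in flagged_sessions:
            release[session_id] = score
            continue
        decision = review_decisions.get(session_id)
        if decision is None:
            hold.append(session_id)        # flagged but not yet reviewed
        elif decision["outcome"] == "cleared":
            release[session_id] = score
        else:
            excluded.append(session_id)    # documented disqualification
    return release, hold, excluded

scores = {"s-001": 41, "s-002": 38, "s-003": 35, "s-004": 30}
flagged = {"s-002", "s-003", "s-004"}
decisions = {
    "s-002": {"outcome": "cleared", "reviewer": "R7", "note": "sibling entered room briefly"},
    "s-004": {"outcome": "disqualified", "reviewer": "R2", "note": "second person answering"},
}
release, hold, excluded = releasable_results(scores, flagged, decisions)
print(release, hold, excluded)
```

Any session left in `hold` blocks final ranking until a reviewer records a decision, which keeps the published list tied to documented outcomes.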

Certification Completes the Accountability Loop

Certificates are not just acknowledgements. They are formal validations of performance. At scale, manual generation introduces the risk of mismatch, delay, or error.

Automated certification ensures that only verified results are issued, maintaining consistency and reliability.
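As one possible illustration, certificates can be generated only from the verified result set and carry a code that lets anyone with the signing key detect tampering. The HMAC-based code, the field names, and the secret below are assumptions for this sketch. The source does not specify how certificates are secured.

```python
import hashlib
import hmac

def certificate_code(secret, exam_code, candidate_id, rank):
    """Derive a verification code from the certificate fields using HMAC-SHA256."""
    payload = f"{exam_code}|{candidate_id}|{rank}".encode("utf-8")
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()

def issue_certificates(verified_results, exam_code, secret):
    """verified_results: candidate_id -> rank, containing only results that passed review."""
    return [
        {"candidate_id": cid, "exam_code": exam_code, "rank": rank,
         "code": certificate_code(secret, exam_code, cid, rank)}
        for cid, rank in sorted(verified_results.items())
    ]

def is_authentic(cert, secret):
    """Recompute the code and compare in constant time."""
    expected = certificate_code(secret, cert["exam_code"], cert["candidate_id"], cert["rank"])
    return hmac.compare_digest(expected, cert["code"])

secret = b"demo-secret-not-for-production"  # hypothetical key
certs = issue_certificates({"cand-2": 5, "cand-1": 1}, "OLY-R1", secret)
tampered = dict(certs[1], rank=1)  # cand-2 claims rank 1
print(is_authentic(certs[0], secret), is_authentic(tampered, secret))
```

Because the input is the verified result set, a session still held for review never reaches certificate generation, and an altered rank no longer matches its code.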

Pre-Launch Checklist for Olympiad Readiness

Readiness is not defined by whether the platform works. It is defined by whether the process is defensible. Identity verification, slot control, monitoring rules, incident workflows, and certification logic must all be configured before launch.

If any of these are missing, the issue will not appear during setup. It will appear after results are released, when correction is no longer easy.

Governance, Results, and Post-Exam Responsibility

Results Are Claims That Must Be Proven

Every published result implies fairness and integrity. Without evidence, this claim is weak. If challenged, it cannot be supported.

This is why olympiad systems must be built with defensibility as a core outcome, not an afterthought.

Audit Trails Connect Evidence Across the Lifecycle

A valid audit trail is not a collection of logs. It is a connected record. Identity data, behavioural flags, session recordings, and review decisions must all link to the same candidate record.

If these exist in isolation, reconstruction becomes difficult and unreliable.
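The difference between a collection of logs and a connected record can be sketched as a join on candidate ID followed by a gap check. The `AuditRecord` fields and the sample identifiers are hypothetical and stand in for whatever stores a given programme uses.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AuditRecord:
    candidate_id: str
    identity_check_id: Optional[str] = None
    recording_uri: Optional[str] = None
    flags: list = field(default_factory=list)
    review_decisions: list = field(default_factory=list)

    def gaps(self):
        """List the evidence missing from this candidate's record."""
        missing = []
        if self.identity_check_id is None:
            missing.append("identity verification")
        if self.recording_uri is None:
            missing.append("session recording")
        if self.flags and not self.review_decisions:
            missing.append("review decision for flagged session")
        return missing

def build_audit_trail(identity, recordings, flags, decisions):
    """Join four separately stored sources on candidate_id."""
    trail = {}

    def record(cid):
        return trail.setdefault(cid, AuditRecord(cid))

    for cid, check_id in identity.items():
        record(cid).identity_check_id = check_id
    for cid, uri in recordings.items():
        record(cid).recording_uri = uri
    for cid, flag in flags:
        record(cid).flags.append(flag)
    for cid, decision in decisions:
        record(cid).review_decisions.append(decision)
    return trail

trail = build_audit_trail(
    identity={"cand-1": "idv-9001", "cand-2": "idv-9002"},
    recordings={"cand-1": "rec/cand-1.webm"},
    flags=[("cand-1", "face_absent@312s"), ("cand-2", "second_voice@45s")],
    decisions=[("cand-1", "cleared by reviewer R7")],
)
print({cid: rec.gaps() for cid, rec in trail.items()})
```

A record with no gaps can be reconstructed on challenge. A record with gaps shows, before results are released, exactly which evidence is missing.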

Analytics Enables Continuous Integrity Improvement

Integrity is not static. Analytics across exam cycles allow organisers to identify patterns – unusual behaviours, high-risk slots, or systemic weaknesses.

This shifts the approach from reactive handling to proactive improvement.
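A simple example of such analysis is comparing each slot's flag rate with the overall rate. The session data, the slot labels, and the factor of 2 used to call a slot "high-risk" are assumptions for illustration only.

```python
from collections import Counter

def flag_rate_by_slot(sessions):
    """sessions: iterable of (slot, was_flagged). Returns slot -> flagged fraction."""
    total, flagged = Counter(), Counter()
    for slot, was_flagged in sessions:
        total[slot] += 1
        if was_flagged:
            flagged[slot] += 1
    return {slot: flagged[slot] / total[slot] for slot in total}

def high_risk_slots(sessions, factor=2.0):
    """Slots whose flag rate is at least `factor` times the overall flag rate."""
    sessions = list(sessions)
    if not sessions:
        return []
    overall = sum(1 for _, was_flagged in sessions if was_flagged) / len(sessions)
    if overall == 0:
        return []
    rates = flag_rate_by_slot(sessions)
    return sorted(slot for slot, rate in rates.items() if rate >= factor * overall)

# Hypothetical cycle: three slots of ten sessions each.
sessions = (
    [("09:00", i < 1) for i in range(10)]    # 1 of 10 flagged
    + [("11:00", i < 1) for i in range(10)]  # 1 of 10 flagged
    + [("21:00", i < 6) for i in range(10)]  # 6 of 10 flagged
)
print(high_risk_slots(sessions))
```

A slot that stands out in this way is a prompt for investigation, for example a leaked paper or a weaker monitoring configuration, rather than proof of misconduct.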

ExamOnline: Built for Olympiad-Scale Assessment

ExamOnline manages the complete online olympiad exam lifecycle within a single, integrated platform, from registration and slot booking through proctoring, incident review, result processing, and certification. Every stage is connected, which means no data gaps, no tool-switching, and no reconciliation headaches at the end.

Organisations already running olympiad programs on existing systems can plug in ExamOnline’s proctoring layer without replacing their current infrastructure.

At scale, this matters. ExamOnline has delivered millions of exam sessions globally with consistent reliability – across academic, certification, and competitive assessment environments.

For olympiad organisers, conducting the exam is rarely the hard part. Defending the result is. ExamOnline is built so that the two are never in conflict.

What is online olympiad exam management?

It is the structured process of delivering competitive exams online, ensuring that identity, monitoring, evaluation, and certification are all connected within a single system.

Why is proctoring critical for olympiads?

Because results are comparative. Any integrity failure affects rankings across the cohort, not just one candidate.

Which proctoring model should be used?

AI proctoring suits large-scale rounds where manual monitoring is not feasible. Live proctoring suits high-stakes stages with fewer candidates, such as final rounds. The choice depends on volume and impact.

How is scale handled without compromising control?

Through concurrent session management, automated monitoring, and systems designed for parallel execution rather than sequential scaling.

What ensures a result is defensible?

A connected audit trail that includes identity verification, behavioural monitoring, session recording, and documented review decisions.